4D Camera Could Improve Robot Vision, Virtual Reality and Self-driving Cars

San Diego, August 4, 2017 — Engineers at Stanford University and the University of California San Diego have developed a camera that generates four-dimensional images and captures a 138-degree field of view. The new camera — the first-ever single-lens, wide field of view, light field camera — could generate information-rich images and video frames that will enable robots to better navigate the world and understand certain aspects of their environment, such as object distance and surface texture.

The researchers also see this technology being used in autonomous vehicles and augmented and virtual reality technologies. Researchers presented their new technology at the computer vision conference CVPR 2017 in July.

“We want to consider what would be the right camera for a robot that drives or delivers packages by air. We’re great at making cameras for humans but do robots need to see the way humans do? Probably not,” said Donald Dansereau, a postdoctoral fellow in electrical engineering at Stanford and the first author of the paper.

The project is a collaboration between the labs of electrical engineering professors Gordon Wetzstein at Stanford and Joseph Ford at UC San Diego.

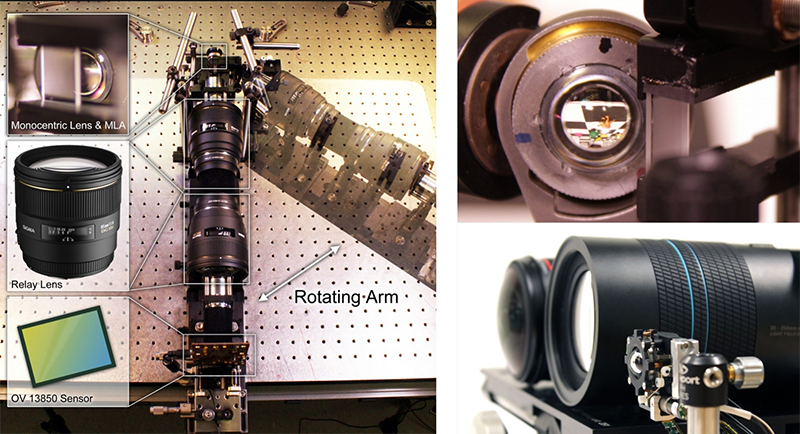

UC San Diego researchers designed a spherical lens that provides the camera with an extremely wide field of view, encompassing more than a third of the circle around the camera. Ford’s group had previously developed the spherical lenses under the DARPA “SCENICC” (Soldier CENtric Imaging with Computational Cameras) program to build a compact video camera that captures 360-degree images in high resolution, with 125 megapixels in each video frame. In that project, the video camera used fiber optic bundles to couple the spherical images to conventional flat focal planes, providing high performance but at high cost.

The new camera uses a version of the spherical lenses that eliminates the fiber bundles through a combination of lenslets and digital signal processing. Combining the optics design and system integration hardware expertise of Ford’s lab with the signal processing and algorithmic expertise of Wetzstein’s lab resulted in a digital solution that not only creates these extra-wide images but also enhances them.

The new camera also relies on a technology developed at Stanford called light field photography, which is what adds a fourth dimension to this camera — it captures the two-axis direction of the light hitting the lens and combines that information with the 2D image. Another noteworthy feature of light field photography is that it allows users to refocus images after they are taken because the images include information about the light position and direction. Robots could use this technology to see through rain and other things that could obscure their vision.
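For readers curious how refocusing after capture can work, here is a minimal sketch of shift-and-sum refocusing, a generic light field technique and not necessarily the processing the researchers used. It assumes the light field is stored as a NumPy array indexed `[u, v, s, t]`, where `(u, v)` is the two-axis direction and `(s, t)` is the 2D image position. The function names `refocus` and `synthetic_point` and the test scene are hypothetical, chosen only for illustration.

```python
import numpy as np


def refocus(light_field, slope):
    """Shift-and-sum refocus of a 4D light field indexed [u, v, s, t].

    Each directional view (u, v) is shifted in proportion to its offset
    from the central view, then all views are averaged. Objects whose
    parallax matches `slope` line up and appear sharp.
    Assumption: integer shifts via np.roll, which wraps around the edges.
    """
    n_u, n_v, n_s, n_t = light_field.shape
    uc, vc = (n_u - 1) / 2, (n_v - 1) / 2  # central view
    out = np.zeros((n_s, n_t), dtype=float)
    for u in range(n_u):
        for v in range(n_v):
            ds = int(round(slope * (u - uc)))
            dt = int(round(slope * (v - vc)))
            out += np.roll(light_field[u, v], shift=(ds, dt), axis=(0, 1))
    return out / (n_u * n_v)


def synthetic_point(n_views=5, size=32, disparity=1):
    """Hypothetical test scene: one bright point that moves by `disparity`
    pixels between neighboring views, as a nearby object would."""
    lf = np.zeros((n_views, n_views, size, size))
    c = (n_views - 1) // 2
    for u in range(n_views):
        for v in range(n_views):
            s = size // 2 + disparity * (u - c)
            t = size // 2 + disparity * (v - c)
            lf[u, v, s, t] = 1.0
    return lf


lf = synthetic_point()
sharp = refocus(lf, slope=-1)   # shifts cancel the point's parallax
blurry = refocus(lf, slope=0)   # plain average: the point is smeared
print(sharp.max(), blurry.max())
```

In this toy scene, choosing the slope that cancels the point's parallax concentrates its light into a single pixel, while any other slope spreads it across many pixels. Sweeping the slope and noting where each region is sharpest is one simple way such data can also yield depth estimates.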

“One of the things you realize when you work with an omnidirectional camera is that it’s impossible to focus in every direction at once — something is always close to the camera, while other things are far away,” Ford said. “Light field imaging allows the captured video to be refocused during replay, as well as single-aperture depth mapping of the scene. These capabilities open up all kinds of applications in VR and robotics.”

“It could enable various types of artificially intelligent technology to understand how far away objects are, whether they’re moving and what they’re made of,” Wetzstein said. “This system could be helpful in any situation where you have limited space and you want the computer to understand the entire world around it.”

And while this camera can work like a conventional camera at far distances, it is also designed to improve close-up images. Examples where it would be particularly useful include robots that have to navigate through small areas, landing drones and self-driving cars. As part of an augmented or virtual reality system, its depth information could result in more seamless renderings of real scenes and support better integration between those scenes and virtual components.

The camera is currently at the proof-of-concept stage and the team is planning to create a compact prototype to test on a robot.

Paper title: “Wide-FOV Monocentric Light Field Camera” by Donald G. Dansereau and Gordon Wetzstein of Stanford; and Glenn Schuster and Joseph Ford of UC San Diego.

This research was funded by the NSF/Intel Partnership on Visual and Experiential Computing.

Media Contacts

Liezel Labios, 858-246-1124, llabios@ucsd.edu